Project AIR Workflow

Project AIR workflow from Vincent Laforet on Vimeo.

For many of us, workflow is the least “sexy” part of any photo or video project. That is until you find yourself waiting forever for a copy to finish as those minutes and hours eat into the few hours of sleep you will get that night/morning… or worse: when you run out of time, and you take a shortcut (because you have to leave a location, or simply need to get some sleep) and you fail to make a redundant copy… and disaster strikes.

There are MANY ways that you can lose critical data. It can be human error: have you ever deleted some original images by mistake? I have, and one was an entire job in fact… recovery can be painfully long, stressful and far from guaranteed. Then there are the obvious dangers of impact, spills, power issues (power loss or spikes during a copy), data corruption from software, and finally hardware failure.

If you only have ONE copy of your data, it takes just one careless mistake (a TSA person dropping a drive, which I’ve seen), a spilled coffee or a software crash to eliminate days, weeks or months’ worth of work – work that is often irreplaceable and near priceless. That is one of the reasons you always want to have a second copy of all of your originals safely PHYSICALLY ISOLATED from all of your other data, and from your work in progress.

Therefore I thought I would share some of the ins and outs of our workflow and data management on this project, “AIR”, given the huge amount of time, planning and coordination that goes into an international project such as this one.

Each city that we photograph is preceded by weeks to months of coordination, and requests for special permission to fly over some of the most venerable cities in Europe. In the case of Berlin, the request went all the way to the Berlin Senate for example.

Clearly on this project (if not ANY project) DATA LOSS IS NOT AN OPTION.

The workflow video above also shows how we configured our setup on location at the Barcelona heliport on this project.

Three Golden Rules To Workflow:

First – the project is generating a fair amount of data. For 6K shooters out there, it may not seem like much, but for still photographers it is quite a large amount: close to 750GB on each shoot. We are shooting video with the Canon C300, 3 x GoPro 4s and 2 x Canon 1DXs, plus the large 50MP files from the Canon 5DS – before you know it, you have a sizeable amount of data to manage from the first of two or three flights over each city.

Most importantly: the data is unusually valuable given the amount of expenses involved in capturing it, not to mention what might be the “once in a lifetime” permissions we are getting from certain cities to fly directly over them.

Therefore RELIABILITY and REDUNDANCY are the keys to success. Always have MULTIPLE copies of your data in SEVERAL PLACES – that is rule number one. Never EVER have one single copy of your work. You are begging for a disaster.

Second – SPEED is an essential part of this project and a big part of why we use the G-Technology drives, which are some of the fastest on the market, using Thunderbolt 2 and USB 3.0.

The speed at which we need to turn images around can be critical. In Europe, it was not uncommon to edit an 8,000-9,000 frame shoot on Saturday, shoot the following city on Sunday, and complete the final edit of BOTH of those cities (nearly 18,000 RAW frames) the following day, just hours prior to a release of selects to the press and to the public.

With each city, I’m shooting an average of 9,000 frames in approximately 3 hours of flight – we are forced to shoot in the high speed drive mode on our cameras, as vibration is an ever-present enemy with the low shutter speeds we are using at night. For every hour that I shoot in the air, I generally spend 4-7 hours on the initial pass of editing, and even more when you take into account the second, third, and color correction passes.

All of this work results in a series of 35-40 images to publish on Storehouse, and 12 master images for each city. Therefore the speed of the drives is absolutely critical. A drive that is half as fast will double the amount of time that I spend behind a computer.

Also, keep in mind the other often overlooked point about the speed of drives: slower drives = more time waiting at the end of the day on set for the DIT to copy the data… slower drives = fewer likely copies and therefore a much greater chance of shortcuts being taken, and ultimately the loss of critical footage or images.
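To put rough numbers on the speed argument, here is a minimal sketch that estimates how long one copy of a shoot takes at a given sustained transfer rate. The 750GB figure comes from the post; the throughput values are hypothetical placeholders, not measured speeds of any particular drive, and real copies are also limited by the card readers and the source media.

```python
def copy_time_minutes(data_gb: float, throughput_mb_s: float) -> float:
    """Estimate the minutes needed to copy data_gb gigabytes at a sustained rate."""
    if throughput_mb_s <= 0:
        raise ValueError("throughput must be positive")
    total_mb = data_gb * 1000  # decimal units: 1 GB = 1000 MB
    return total_mb / throughput_mb_s / 60


SHOOT_GB = 750               # per-shoot volume quoted in the post
FAST_MB_S = 400              # hypothetical sustained rate, for illustration only
SLOW_MB_S = FAST_MB_S / 2    # a drive that is half as fast

fast = copy_time_minutes(SHOOT_GB, FAST_MB_S)
slow = copy_time_minutes(SHOOT_GB, SLOW_MB_S)
print(f"fast drive: {fast:.2f} min per copy")
print(f"half-speed drive: {slow:.2f} min per copy")
```

Because the estimate is a simple division, halving the throughput doubles the wait for every copy, and that wait is multiplied again by the number of redundant copies you intend to make.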

The third golden rule is DISCIPLINE and/or building FAILSAFES into your workflow. Making multiple copies in the first phase of ingesting your data is critical. You never want to have just ONE copy of the data at any time. Setting up the correct workflow blueprint that ensures these failsafes is key.

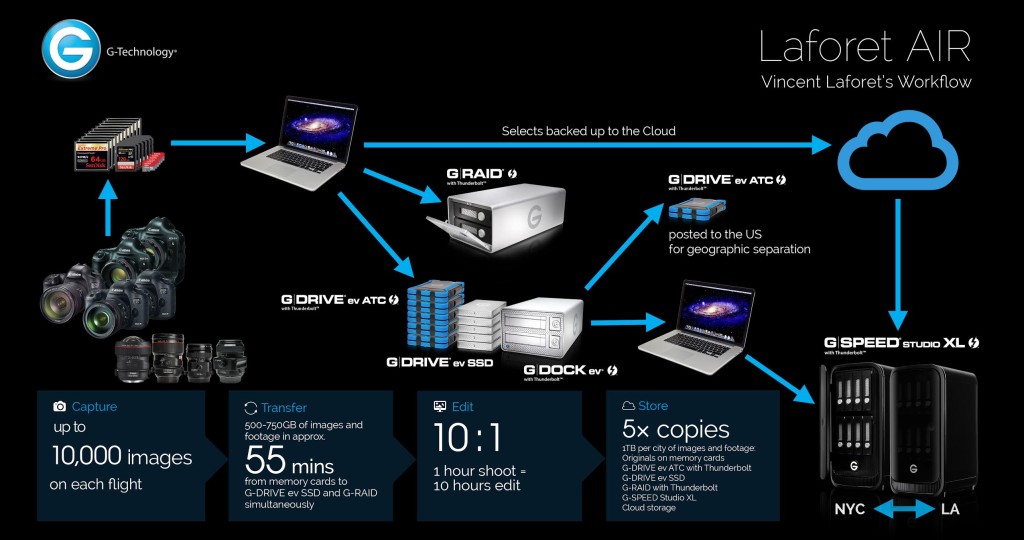

Below is a visual example of our workflow, followed by a written, step-by-step description:

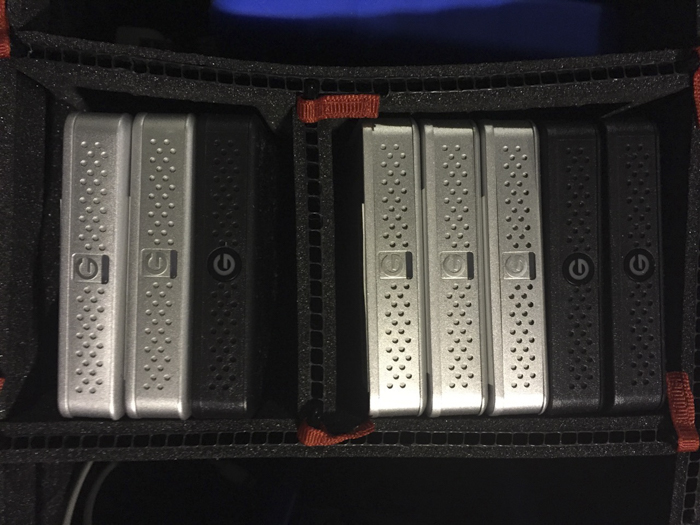

Our first target is a 512GB SSD inside of a Gtech EV Dock. The second target is a 16TB Thunderbolt G-RAID drive set to RAID 1 for added redundancy, as each of the two disks within the RAID has an identical copy of the data.
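The trade-off between the two common two-disk RAID modes can be sketched in a few lines. This is an illustration only: it assumes the 16TB figure is the raw total of two equal 8TB disks, which the post does not state explicitly, and the function names are made up for the example.

```python
def usable_capacity_tb(disk_tb: float, disks: int, level: int) -> float:
    """Usable capacity for a set of equal disks in RAID 0 or RAID 1."""
    if disks < 2:
        raise ValueError("a RAID set needs at least two disks")
    if level == 0:
        return disk_tb * disks   # striping: all space is usable, no redundancy
    if level == 1:
        return disk_tb           # mirroring: every disk holds the same data
    raise ValueError("only RAID 0 and RAID 1 are modelled here")


def survives_single_disk_failure(level: int) -> bool:
    """RAID 1 keeps a full copy on each disk; RAID 0 loses data if any disk fails."""
    return level == 1


# Assumption: 2 x 8 TB disks inside the 16 TB enclosure.
for level in (0, 1):
    print(
        f"RAID {level}: {usable_capacity_tb(8, 2, level)} TB usable,",
        "survives one disk failure:", survives_single_disk_failure(level),
    )
```

Under that assumption, mirroring halves the usable space in exchange for two identical copies, which is the redundancy described above.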

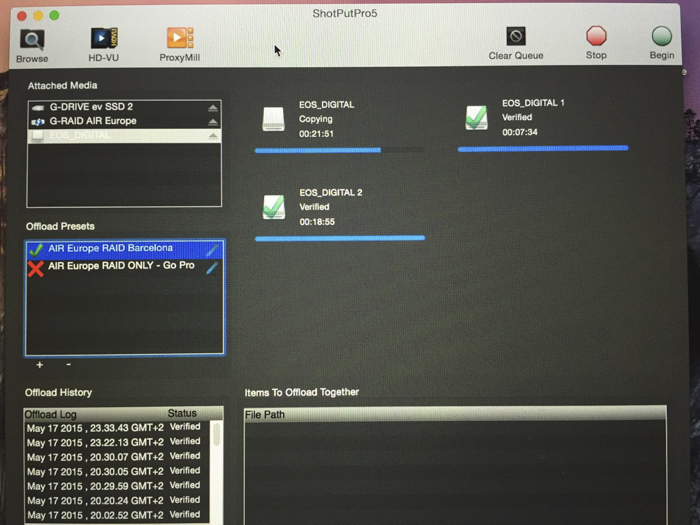

We use ShotPut Pro to copy the camera media across, and a Lexar modular HR2 hub to offload 3 CF cards (all SanDisk 128GB Extreme Pro cards) and 3 Micro SD cards (all SanDisk 64GB Extreme PLUS cards from the GoPros) simultaneously.

At this point we have 4 copies of the data: the original cards, the SSD, and the two drives within the G-RAID.
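For readers without dedicated offload software, the idea of copying one card to several targets and verifying each copy can be sketched with the Python standard library. This is not how ShotPut Pro works internally; `copy_and_verify`, `sha256_of` and the folder names are hypothetical, and the demo uses a temporary folder with a stand-in file rather than real camera media.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1024 * 1024) -> str:
    """Hash a file in chunks so large RAW or video files do not fill memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def copy_and_verify(source: Path, targets: list[Path]) -> dict[Path, list[Path]]:
    """Copy every file under source to each target; return mismatched files per target."""
    files = sorted(p for p in source.rglob("*") if p.is_file())
    checksums = {p: sha256_of(p) for p in files}  # hash the originals once
    mismatches: dict[Path, list[Path]] = {}
    for target in targets:
        bad = []
        for src_file in files:
            dest_file = target / src_file.relative_to(source)
            dest_file.parent.mkdir(parents=True, exist_ok=True)
            shutil.copy2(src_file, dest_file)  # copies data and timestamps
            if sha256_of(dest_file) != checksums[src_file]:
                bad.append(dest_file)
        mismatches[target] = bad
    return mismatches


# Demo with throwaway folders standing in for a card, the SSD and the RAID.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    card = root / "card"
    card.mkdir()
    (card / "IMG_0001.CR2").write_bytes(b"stand-in for a RAW file")
    report = copy_and_verify(card, [root / "ssd", root / "raid"])
    all_verified = all(not bad for bad in report.values())

print("all copies verified:", all_verified)
```

An empty list for every target means each copy matched its original. Note that a read-back immediately after copying may be served from the operating system's cache, so a dedicated offload tool is still the safer choice on a real job.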

Because there are 3 of us on the project team, we have 3 separate copies in 3 separate hotel rooms (physical locations). Regardless of the team’s size, I always have a minimum of 2 copies with two different people, no matter how small the job.

Some might call this excessive but if you’re working in digital photography and/or video and you’ve ever experienced the loss of data on a job, let alone with family photos which can be even more valuable to many of us than any job, your perspective will quickly change!

Always remember that if you only have ONE copy of your data, it’s unfortunately a matter of ‘when’ not ‘if’ data corruption or loss will happen to you. Every single drive (spinning or SSD) has a life expectancy range. Impacts, data corruption, power losses or surges WILL happen. And those can be fatal to any drive in existence today.
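A back-of-the-envelope calculation shows why each extra copy matters. The sketch below assumes every copy fails independently with the same probability over some period; the 5% figure is purely hypothetical and not taken from any drive statistics. Copies that travel in the same bag or sit in the same room are not independent, which is the reason for keeping them physically isolated.

```python
def chance_all_copies_lost(per_copy_failure: float, copies: int) -> float:
    """Probability that every copy is lost, assuming independent failures."""
    if not 0.0 <= per_copy_failure <= 1.0:
        raise ValueError("per_copy_failure must be between 0 and 1")
    if copies < 1:
        raise ValueError("need at least one copy")
    return per_copy_failure ** copies


P_FAIL = 0.05  # hypothetical chance of losing any single copy

for n in (1, 2, 3, 4):
    print(f"{n} copies: {chance_all_copies_lost(P_FAIL, n):.6%} chance of total loss")
```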

I’ve had an extremely good track record with G-Technology for all my data storage needs in terms of reliability, and I’ve come to depend on them just as much for the workflow they allow me to adopt and the speed of their drives.

Below you can see an illustration of the above. It has changed somewhat since we landed in Europe (some of the quantities and numbers are slightly different, which is to be expected).

Hardware

Below is a detailed breakdown of the hardware and software that make up Project AIR’s workflow:

G-DRIVE ev ATC with Thunderbolt (1 TB)

G-RAID with Thunderbolt 2 (16TB)

G-SPEED Studio XL with Thunderbolt (64TB)

Editing / Post-Processing:

Adobe Lightroom is the software that I do my initial editing in. I use DxO OpticsPro post-production software to manage the noise caused by shooting at such high ISOs.

Editing is a 10:1 factor on shooting time – for every hour of shooting, I end up spending about 10 hours editing the photos. A tremendous amount of work goes into image selection, as I review each frame at 1:1 to ensure that each is sharp and not a victim of image blur from the helicopter vibration. Were the drives half as fast, we’d be at 20:1, which would just kill us on the road with our tight turnaround times.

Our retoucher does all of the finishing work to make the photos ready for online viewing, the AIR book, and other media.

Hope this was useful. I would love to hear how you are managing your data: drop me a comment here or connect with me on Twitter or Facebook and let me know.

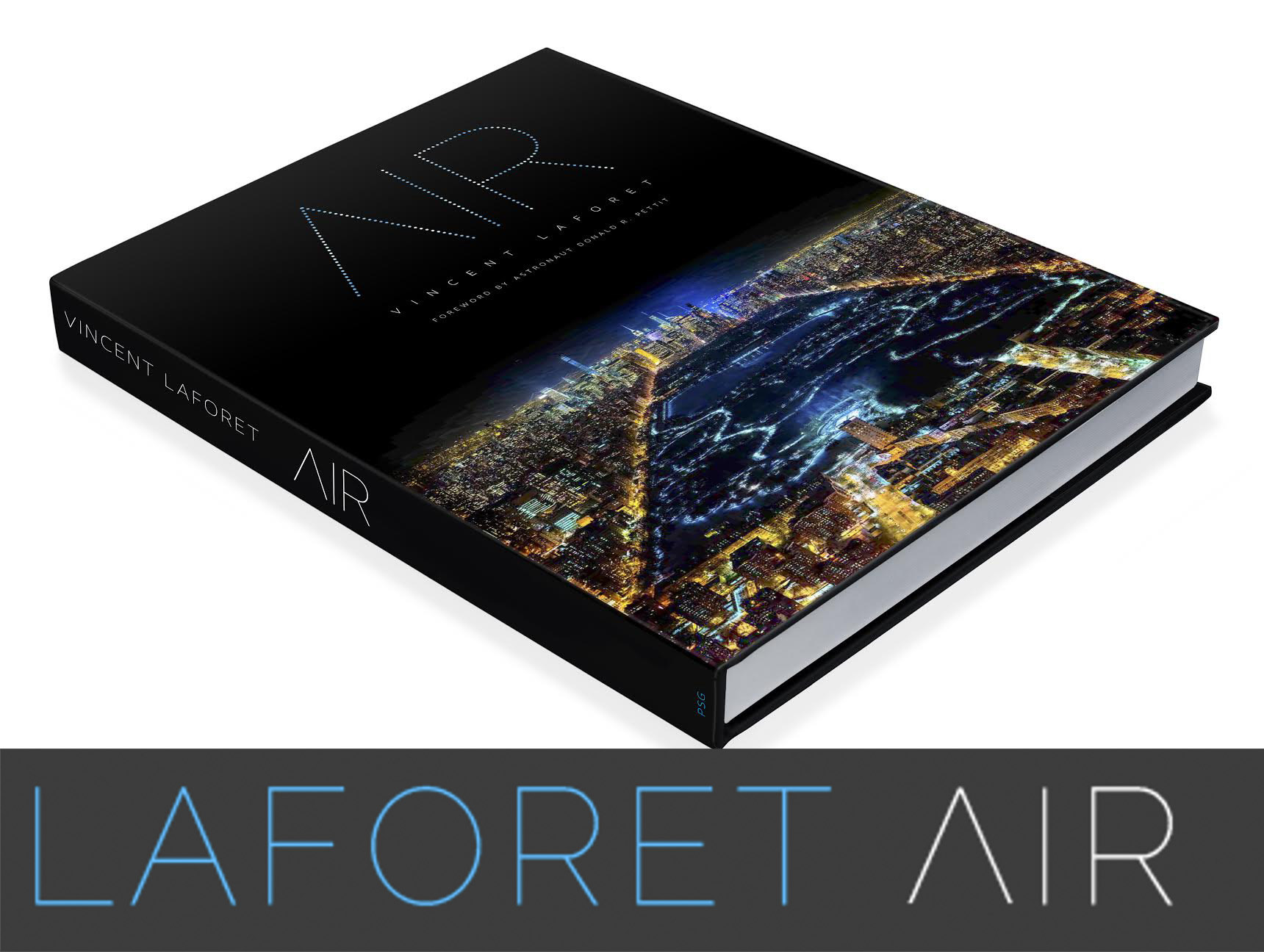

To find out more about Project AIR or to support it, and enable us to photograph more cities in the future, you can pre-order the AIR book, or purchase postcards, lithographs, and fine art prints now at LaforetAir.com. If you’d like

Tags: gtech, gtechnology, projectair, Workflow

I sure hope you meant RAID 1, not RAID 0.

http://en.wikipedia.org/wiki/Standard_RAID_levels#RAID_1

Awesome post. Love this project. So say your budget isn’t large enough for all this storage space. What is the best step for workflow? One drive to work on. One drive for file back and one drive to back up your computer?

Thanks for the writeup, impressive and interesting! Though I think this is a mistake: “The second target is a 16TB Thunderbolt G-RAID drive set to RAID 0 for added redundancy”. Raid 0 has no redundancy, it’s increasing speed without redundancy. 1:1 redundancy would be RAID 1.

Thank you Vincent. That’s a lot of answer…

Great Day!

“The second target is a 16TB Thunderbolt G-RAID drive set to RAID 0 for added redundancy, as each of the two disks within the RAID has an identical copy of the data.” It should say “..set to RAID 1..” .. not 0 ..

Very interesting post. It would be great to see how the city scapes were processed in Lightroom.

Cool method. I believe you meant to say RAID 1 Instead of 0 in the video.

Keep up the great work.

First of all, I am so glad I did visit the Laforet meeting in Paris, the work you guys make is truly amazing and I am a big fan of your work (I just saw the photo I got from you in good daylight and I am just stunned by the sharpness and detail you can see!). I was amazed when you said you edit the pictures in post for 30s tops (hard to believe really, it looks like you did spend hours on it). The tip to shoot it in 3000 Kelvin was a nice one as well. Will definitely look into the G-Technology products when I am back home, my current Seagate backup drives failed on me a couple of times and I am willing to take the next big step into a more PRO backup solution/workflow from now on. Keep up the good work! I am curious what great projects (photography/film) we can expect from your team in the future. I believe your team has put night photography with the city as subject back on the map but in a unique style we haven’t seen before (especially the blue glow in the photographs makes it incredibly interesting).

Really? You backup 268Gbytes into the cloud from your hotel room? How many months are you staying in the room?

I have never seen hotel internet speeds that would allow you to do that. Even here in my house, where we have 200 Mbit/sec theoretical upload speeds running over fibre, I wouldn’t expect to do 100 Gbytes to Dropbox in less than 24 hrs.

I back up everything now! Although we all wish every budget we worked with allowed for this kind of safety precaution, the overall message is loud and clear. Back up your footage or you are asking for a world of hurt! Very informative, thanks Vincent